Faster web apps by hiding processing time: a lightning talk

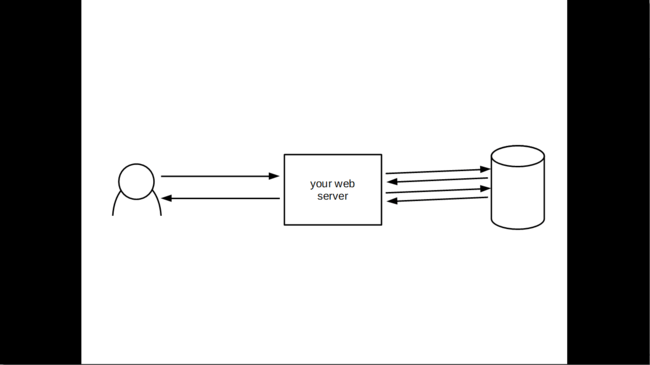

This is your web server:

This is a client, making a request to your server:

A web application consists of both the client and the server. The client might be a browser, beautifully rendering the HTML, CSS and JavaScript you send back. The actual user interacts with the browser.

Sometimes a client doesn’t have a human user. It might be a different system.

Anyway, we don’t care about the user. We care about how fast your web application is. And right now, a client is making a request to your web server.

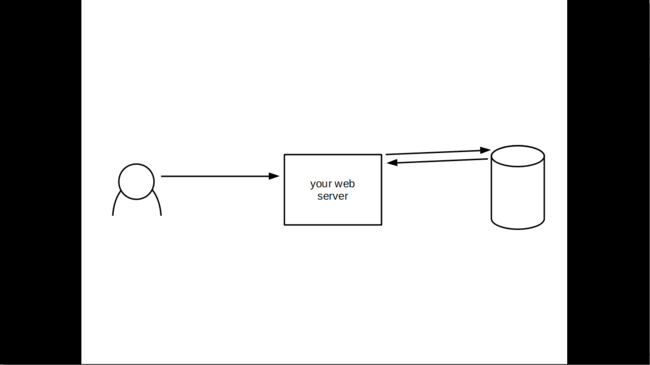

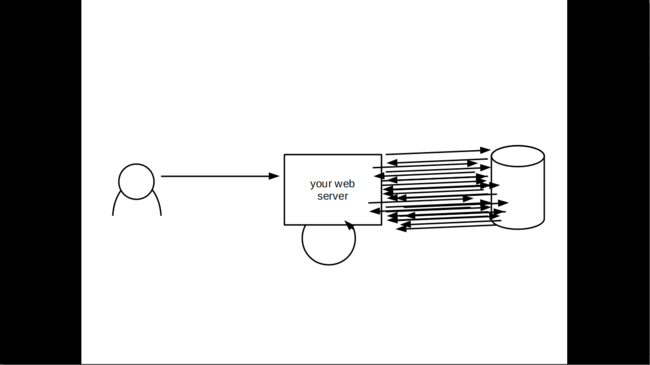

Your web server is eager to please. So it makes a request to your database:

Actually it needs a bit more information:

Now the web server has recognized the client. Let’s start getting the information we need to put together a response to the request.

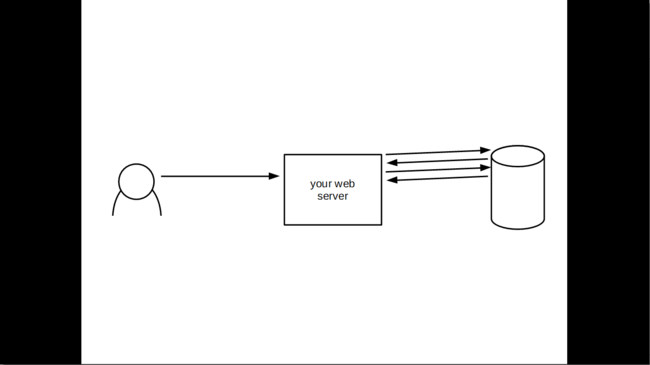

To some of you, the server may seem extremely wasteful with its number of queries, but this is fairly common in web applications today. They make a whole bunch of queries!

Anyway, the server now has the data it needs. The last step is transforming that into a response:

I call these responses web objects: the final bits of data that are transmitted across the web. A web object might be HTML (the data rendered in a template), or it might be JSON.

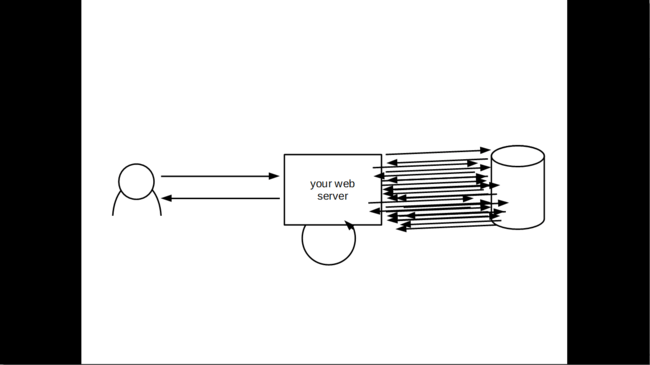

Finally we can send the data back to the client:

I’m almost exhausted from all this work! And look at the client: it looks pretty dissatisfied at having had to wait for all that.
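The request-time flow described above (recognize the client, query for the data, transform it into a web object, send it back) can be sketched roughly as follows. This is a minimal illustration, not the author’s code: the SQLite schema, the `make_db` and `handle_request` names, and the sample data are all hypothetical.

```python
import json
import sqlite3


def make_db() -> sqlite3.Connection:
    # Hypothetical schema and data, purely for illustration.
    db = sqlite3.connect(":memory:")
    db.executescript("""
        CREATE TABLE sessions (token TEXT PRIMARY KEY, user_id INTEGER);
        CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE articles (id INTEGER PRIMARY KEY, title TEXT, body TEXT);
        INSERT INTO sessions VALUES ('abc', 1);
        INSERT INTO users VALUES (1, 'Ada');
        INSERT INTO articles VALUES (1, 'Hello', 'First post');
    """)
    return db


def handle_request(db: sqlite3.Connection, token: str, article_id: int) -> str:
    # 1. Recognize the client (two queries).
    (user_id,) = db.execute(
        "SELECT user_id FROM sessions WHERE token = ?", (token,)).fetchone()
    (name,) = db.execute(
        "SELECT name FROM users WHERE id = ?", (user_id,)).fetchone()
    # 2. Gather the data the response needs (more queries).
    title, body = db.execute(
        "SELECT title, body FROM articles WHERE id = ?", (article_id,)).fetchone()
    # 3. Transform the data into a web object, here JSON.
    return json.dumps({"user": name, "title": title, "body": body})
```

Every step runs while the client waits, on every single request.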

We don’t have to disappoint the client, though. We can save the client a lot of waiting by cutting out the time spent querying the database and transforming the results into a response. We do this by having already built the response the client needs.

That way, we only need to understand the client’s request — maybe a few queries to recognize them — and then send back an already prepared response:

Is that cheating? No more than a TV chef pulling out a pie that was baked before the show! Trust me, it is better that way. You don’t need to sit around waiting for the dough to rise in order to understand how to make artisanal spelt bread. It’s a part of the process that has no value to the client.

We can reach this model by building responses ahead of time. Every time the underlying data changes, we build a new version of the page and have it ready to serve.
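Here is a minimal sketch of building on change, assuming in-memory dictionaries stand in for the database and for wherever the prebuilt pages live (files, a key-value store). The names `save_article`, `render_article` and `serve` are hypothetical. The write path does the rendering; the read path is a single lookup.

```python
from html import escape

# Hypothetical in-memory stores; a real app would use a database for the
# source data and files or a key-value store for the prebuilt pages.
articles: dict[int, dict[str, str]] = {}
prebuilt: dict[str, str] = {}


def render_article(article: dict[str, str]) -> str:
    # The expensive part (queries, templating); trivial here.
    return f"<h1>{escape(article['title'])}</h1><p>{escape(article['body'])}</p>"


def save_article(article_id: int, title: str, body: str) -> None:
    # Write path: store the data, then rebuild the page right away,
    # so no reader has to wait for rendering.
    articles[article_id] = {"title": title, "body": body}
    prebuilt[f"/articles/{article_id}"] = render_article(articles[article_id])


def serve(path: str) -> tuple[int, str]:
    # Read path: one lookup, no queries, no templating.
    page = prebuilt.get(path)
    if page is None:
        return 404, "<h1>Not found</h1>"
    return 200, page
```

For example, after `save_article(1, "Hello", "World")`, calling `serve("/articles/1")` returns `(200, "<h1>Hello</h1><p>World</p>")`. The rendering cost is paid once per change instead of once per request.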

This might not make sense for all the web objects your web server serves, but for a bunch of them it will.

If you are running a newspaper, where a large part of each page is constant (like, you know, the article), this model works great.

Unlike traditional caching (with Varnish, for example), this technique also works for responses to modification requests. We can prepare all the possible success and error pages in advance, and simply send back the right one once we know which it is, instead of generating it first.
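As an illustration, consider a hypothetical newsletter signup form. A minimal sketch, assuming the outcome pages contain no request-specific data: every possible result page is rendered once up front, and the handler only does the actual modification and then picks a page. The outcome keys, messages and `handle_signup` are invented for the example.

```python
# Hypothetical signup form: every possible outcome page is built once,
# ahead of time, instead of on each submission.
OUTCOMES = {
    "ok": "Thanks for signing up!",
    "invalid_email": "That email address doesn't look right.",
    "already_subscribed": "You're already on the list.",
}
PAGES = {key: f"<html><body><p>{msg}</p></body></html>"
         for key, msg in OUTCOMES.items()}

subscribers: set[str] = set()


def handle_signup(email: str) -> str:
    # Do the actual work of the modification request...
    if "@" not in email:
        outcome = "invalid_email"
    elif email in subscribers:
        outcome = "already_subscribed"
    else:
        subscribers.add(email)
        outcome = "ok"
    # ...then send back the matching page, which already exists.
    return PAGES[outcome]
```

This only fits when the set of outcomes is small and known in advance; a page that echoes the submitted data back would still need to be rendered per request.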

In fact, think about how many times the same page is rendered in your system:

How many times is the exact same page built by concatenating the exact same strings together in the exact same order? If your system gets any real traffic, it probably happens a lot.
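One hypothetical way to find out for your own system is to fingerprint every page you build and count the repeats. A minimal sketch, assuming you can wrap your rendering function; `render_page` and `render_counts` are invented names, and the 1,000 simulated requests are made up.

```python
import hashlib
from collections import Counter

# Hypothetical instrumentation: count how often each distinct page is built.
render_counts: Counter = Counter()


def render_page(title: str, body: str) -> str:
    html = "<h1>" + title + "</h1><p>" + body + "</p>"
    # Fingerprint the output so identical pages share one counter.
    render_counts[hashlib.sha256(html.encode("utf-8")).hexdigest()] += 1
    return html


# Simulate 1,000 requests for the same, unchanged article.
for _ in range(1000):
    render_page("Hello", "World")

# Every build after the first produced a page we already had.
redundant_builds = sum(n - 1 for n in render_counts.values())
print(redundant_builds)  # 999
```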

All this time spent doing the same thing again and again can be saved altogether. And the time spent doing it the first time can be hidden from our users. They won’t have to wait for the web server to get its stuff together before it can send a response.
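To hide even the first build, the rendering can be moved off the request path entirely. A minimal sketch, assuming a single background worker thread fed by a queue; `update`, `render` and `rebuild_worker` are hypothetical names, and dictionaries stand in for real storage.

```python
import queue
import threading

# Hypothetical stores: source data and prebuilt pages, keyed by URL path.
source_data: dict[str, str] = {}
prebuilt: dict[str, str] = {}
rebuild_queue: queue.Queue = queue.Queue()


def render(path: str) -> str:
    # Stand-in for the slow work: queries plus templating.
    return f"<p>{source_data[path]}</p>"


def rebuild_worker() -> None:
    # Runs in the background, so nobody waits on the build.
    while True:
        path = rebuild_queue.get()
        prebuilt[path] = render(path)
        rebuild_queue.task_done()


threading.Thread(target=rebuild_worker, daemon=True).start()


def update(path: str, text: str) -> None:
    source_data[path] = text
    rebuild_queue.put(path)  # return at once; the worker renders later
```

The tradeoff is freshness: until the worker finishes, readers still get the previous version of the page (or nothing, for a brand-new page).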

In short, we can hide as much processing time as possible from the user, in order to make them feel like we are providing a faster response.

We could be doing a lot better with our web applications than we currently are.

By saving processing time, we need smaller servers for our applications, and we respond to requests more quickly. Faster responses mean fewer requests in flight at any one time, which lightens the load on the server even further.
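The claim that faster responses lead to fewer concurrent requests follows from Little’s law, a standard queueing result: the average number of requests in flight, $L$, equals the arrival rate $\lambda$ times the average response time $W$. With numbers chosen only for illustration:

$$L = \lambda W$$

$$\lambda = 100\ \text{req/s},\quad W = 0.2\ \text{s} \;\Rightarrow\; L = 100 \times 0.2 = 20$$

$$\lambda = 100\ \text{req/s},\quad W = 0.02\ \text{s} \;\Rightarrow\; L = 100 \times 0.02 = 2$$

At the same traffic, cutting the response time tenfold cuts the average number of concurrent requests tenfold too.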

We should be able to make web application servers quite a lot faster.

Thanks for your time!

Of course, I haven’t been able to go into much detail about how something like this would be implemented, nor to cover all the potential pitfalls and tradeoffs. This is a lightning talk! Just a quick overview of an idea that might grab your attention.

I have written more extensively about the topic in “Hide processing time from the user.”

I also wrote an even earlier (and maybe slightly more practical, and definitely shorter) piece on the core idea of caching files statically on change, instead of building them on request, in “Static pages in dynamic web apps.”

There’s probably something I still haven’t covered. (I don’t feel like I am done with this topic yet!) Feel free to let me know if you have any questions!